Continuing On

In the previous section, we covered what this actual plan will do and how to create a basic ClickHouse service utilizing Aiven’s or ClickHouse’s DBaaS offering. In this section, we will start the process of getting the data into ClickHouse and getting replication set up between it and BigQuery.

GitHub Repository

Throughout this article, I will reference a Cloud Function and a BigQuery job. The source code for these two artifacts are located here in this GitHub repository.

Note this code is very simplistic by design and is built as a learning piece. So there is a very high chance it will need to be customized to provide real-world usage for your scenario.

Getting Data Out of BigQuery to ClickHouse

There currently are no automated methods of doing CDC or streaming data out of BigQuery into an arbitrary destination. This means that data must be either captured before inserting it into BigQuery or extracted after it has been inserted to capture it. The lack of any official method makes this a very in-depth subject. Due to this, I will be covering only batch loads in this article.

That’s discussed in the next section, but first, we need to get the initial dataset out of BigQuery and into ClickHouse to create our baseline.

BigQuery has an export function that is the easiest way to get data out of it into somewhere else. There are two key disadvantages of using it though: it can only export a single table at a time and it can only target GCS for storing the data.

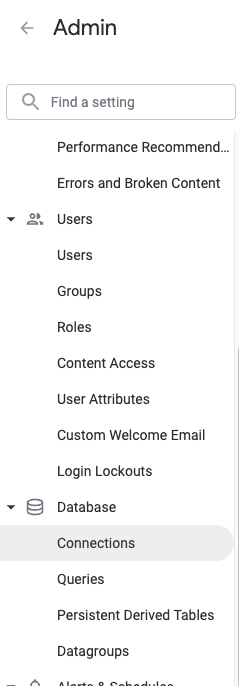

Due to the limitations of a single table at a time now is a good time to determine if all tables need to be replicated into ClickHouse or which subset of tables are good candidates to replicate over for usage by Looker.

A quick way to list out all tables is to run the following query against your dataset which will spit out the whole list for you. Additionally, if you are unsure which tables are used very often this query will list out the count of queries that have hit each table in the dataset. Note this query can cost a significant amount of money, so make sure you check the cost estimate in the UI first then adjust the number of days it scans before running it.

When going to do the actual export the best way to do this is to use the bq CLI command and grab a whole table. The reason why I recommend this is because utilizing the “EXPORT DATA…” SQL command will incur processing/scan charges for the amount of data exported, or slot charges for the export if using Editions, whereas the CLI command, or the API call, will just dump the whole table without additional charges.

Now if you want only part of a table, which normally is just a set of partitions of a table, then there is a trick you can do using the bq cp command to copy a partition to a new table that can be loaded directly into ClickHouse. Unfortunately, this command won’t work with wildcards or more than one partition at a time so you must run it per partition, this can be relatively easily scripted but I want to provide the command to do this here:

bq cp –append_table=true `<source_project_id>:<source_dataset>.<source_table>$<source_partition_name>` `<target_project_id>.<target_dataset>.<target_table>`

If it’s not partitioned (and not a massive table) I just recommend loading the whole table into ClickHouse and pruning it from there using SQL so you don’t incur processing charges on BigQuery.

When you are ready to do the initial export then let’s carry on.

I am going to be dumping the data out into the parquet format since it is the closest file type to the filesystem BigQuery uses and is easily loaded into ClickHouse with the column types intact.

The command to export a table from BigQuery is:

bq extract — destination_format=PARQUET \

<project_id>:<dataset>.<table_name> \

gs://<bucket_name>/<path_and_filename>

(Note on this if you are putting the files into a sub-folder make sure it exists in your bucket or else you will get a very obtuse error message about positional arguments)

Once a table has been exported to GCS then it’s time to load it into ClickHouse and thus we continue our journey.

Loading the Initial Data into ClickHouse

When loading into ClickHouse the official SQL query to run is this (at the time of the writing):

CREATE TABLE <table_name>

ENGINE = MergeTree

ORDER BY tuple() AS

SELECT *

FROM S3Cluster(default,

'https://storage.googleapis.com/<bucket_name>/<path>/*.parquet');

Note: there is a bug in some versions of ClickHouse where the above query will fail, but will still create the table. To get around this just do an INSERT INTO